Knowledge

- Identify RAID levels

- Identify supported HBA types

- Identify virtual disk format types

Skills and Abilities

- Determine use cases for and configure VMware DirectPath I/O

- Determine requirements for and configure NPIV

- Determine appropriate RAID level for various Virtual Machine workloads

- Apply VMware storage best practices

- Understand use cases for Raw Device Mapping

- Configure vCenter Server storage filters

- Understand and apply VMFS re-signaturing

- Understand and apply LUN masking using PSA-related commands

- Analyze I/O workloads to determine storage performance requirements

- Identify and tag SSD devices

- Administer hardware acceleration for VAAI

- Configure and administer profile-based storage

- Prepare storage for maintenance

- Upgrade VMware storage infrastructure

Identify RAID levels

The following is a brief summary of the most commonly used RAID levels (source: http://en.wikipedia.org/wiki/RAID).

- RAID 0 (block-level striping without parity or mirroring) has no (or zero) redundancy. It provides improved performance and additional storage but no fault tolerance; simple stripe sets are normally referred to as RAID 0. Any disk failure destroys the array, and the likelihood of failure increases with more disks in the array (a two-disk RAID 0 array is at least twice as likely to suffer catastrophic data loss as a single drive without RAID). A single disk failure destroys the entire array because, when data is written to a RAID 0 volume, it is broken into fragments called blocks, whose size is dictated by the stripe size, a configuration parameter of the array, so every file is spread across all the disks. The blocks are written to their respective disks simultaneously, so smaller sections of the data can be read off the drives in parallel, increasing bandwidth. RAID 0 does not implement error checking, so any error is uncorrectable. More disks in the array means higher bandwidth, but greater risk of data loss.

- In RAID 1 (mirroring without parity or striping), data is written identically to multiple disks (a “mirrored set”). Although many implementations create sets of 2 disks, sets may contain 3 or more disks. The array provides fault tolerance from disk errors or failures and continues to operate as long as at least one drive in the mirrored set is functioning. With appropriate operating system support, there can be increased read performance and only a minimal reduction in write performance. Using RAID 1 with a separate controller for each disk is sometimes called duplexing.

- In RAID 2 (bit-level striping with dedicated Hamming-code parity), all disk spindle rotation is synchronized, and data is striped such that each sequential bit is on a different disk. Hamming-code parity is calculated across corresponding bits on disks and stored on one or more parity disks. Extremely high data transfer rates are possible.

- In RAID 3 (byte-level striping with dedicated parity), all disk spindle rotation is synchronized, and data is striped such that each sequential byte is on a different disk. Parity is calculated across corresponding bytes on disks and stored on a dedicated parity disk. Very high data transfer rates are possible.

- RAID 4 (block-level striping with dedicated parity) is identical to RAID 5 (see below), but confines all parity data to a single disk, which can create a performance bottleneck. In this setup, files can be distributed between multiple disks. Each disk operates independently, which allows I/O requests to be performed in parallel, though data transfer speeds can suffer due to the type of parity. Error detection is achieved through the dedicated parity, which is stored on a separate, single disk.

- RAID 5 (block-level striping with distributed parity) distributes parity along with the data and requires all drives but one to be present to operate; drive failure requires replacement, but the array is not destroyed by a single drive failure. Upon drive failure, any subsequent reads can be calculated from the distributed parity such that the drive failure is masked from the end user. The array will have data loss in the event of a second drive failure and is vulnerable until the data that was on the failed drive is rebuilt onto a replacement drive. A single drive failure in the set will result in reduced performance of the entire set until the failed drive has been replaced and rebuilt.

- RAID 6 (block-level striping with double distributed parity) provides fault tolerance from two drive failures; the array continues to operate with up to two failed drives. This makes larger RAID groups more practical, especially for high-availability systems, and it becomes increasingly important as large-capacity drives lengthen the time needed to recover from the failure of a single drive. Single-parity RAID levels are as vulnerable to data loss as a RAID 0 array until the failed drive is replaced and its data rebuilt; the larger the drive, the longer the rebuild takes. Double parity gives time to rebuild the array without the data being at risk if a single additional drive fails before the rebuild is complete.
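The single-parity mechanism behind RAID 4 and RAID 5 is a byte-wise XOR across the data blocks of a stripe, which is why any one missing block can be recomputed from the survivors. The following is a minimal Python sketch of that idea plus a usable-capacity helper. It assumes identical disks, treats RAID 1 as one n-way mirror, and ignores parity rotation and RAID 6's second parity (which uses a different code, not plain XOR), so it illustrates the arithmetic rather than modelling any particular controller.

```python
from functools import reduce


def xor_blocks(blocks):
    """Byte-wise XOR of equally sized blocks: the single-parity operation of RAID 4/5."""
    if len({len(b) for b in blocks}) != 1:
        raise ValueError("all blocks in a stripe must have the same, non-empty set of sizes")
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def rebuild_missing(surviving_blocks, parity):
    """Recompute the one lost data block of a stripe from the survivors plus the parity."""
    return xor_blocks(list(surviving_blocks) + [parity])


def usable_capacity(level, disks, disk_size):
    """Usable capacity of `disks` identical drives of `disk_size` each (any unit)."""
    minimum = {0: 2, 1: 2, 5: 3, 6: 4}
    if level not in minimum:
        raise ValueError(f"RAID {level} is not modelled here")
    if disks < minimum[level]:
        raise ValueError(f"RAID {level} needs at least {minimum[level]} disks")
    if level == 0:
        return disks * disk_size          # striping only, no redundancy
    if level == 1:
        return disk_size                  # every disk holds a full copy
    if level == 5:
        return (disks - 1) * disk_size    # one disk's worth of distributed parity
    return (disks - 2) * disk_size        # RAID 6: two disks' worth of parity
```

For example, with a stripe of three blocks `[b"\x01\x02", b"\x10\x20", b"\xff\x00"]`, the parity is `b"\xee\x22"`, and XOR-ing the first block, the third block and the parity gives back the second block.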

Identify supported HBA types

VMware lists all supported HBA types in the Storage/SAN Compatibility Guide, available on the VMware website: http://www.vmware.com/resources/compatibility/pdf/vi_san_guide.pdf

Identify virtual disk format types

The supported disk formats in ESX and ESXi are (source: VMware KB article 1022242):

- zeroedthick (default) – Space required for the virtual disk is allocated during the creation of the disk file. Any data remaining on the physical device is not erased during creation, but is zeroed out on demand at a later time on first write from the virtual machine. The virtual machine does not read stale data from disk.

- eagerzeroedthick – Space required for the virtual disk is allocated at creation time. In contrast to zeroedthick format, the data remaining on the physical device is zeroed out during creation. It might take much longer to create disks in this format than to create other types of disks.

- thick – Space required for the virtual disk is allocated during creation. This type of formatting does not zero out any old data that might be present on this allocated space. A non-root user cannot create disks of this format.

- thin – Space required for the virtual disk is not allocated during creation, but is supplied and zeroed out on demand at a later time.

- rdm – Virtual compatibility mode for raw disk mapping.

- rdmp – Physical compatibility mode (pass-through) for raw disk mapping.

- raw – Raw device.

- 2gbsparse – A sparse disk with 2GB maximum extent size. You can use disks in this format with other VMware products; however, you cannot power on a sparse disk on an ESX host until you reimport the disk with vmkfstools in a compatible format, such as thick or thin.

- monosparse – A monolithic sparse disk. You can use disks in this format with other VMware products.

- monoflat – A monolithic flat disk. You can use disks in this format with other VMware products.
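The thick and thin formats above differ mainly in when space is allocated and when old data is zeroed. The toy Python model below makes that timing explicit. It is a sketch only: the class name is hypothetical, it counts blocks rather than bytes, and it does not reflect how VMFS is actually implemented.

```python
class VirtualDiskModel:
    """Toy model of allocation and zeroing behaviour for the VMFS disk formats above."""

    FORMATS = {"thin", "zeroedthick", "eagerzeroedthick", "thick"}

    def __init__(self, fmt, size_blocks):
        if fmt not in self.FORMATS:
            raise ValueError(f"unsupported format: {fmt}")
        self.fmt = fmt
        self.size_blocks = size_blocks
        # thin allocates nothing up front; every thick variant reserves all blocks.
        self.allocated = set() if fmt == "thin" else set(range(size_blocks))
        # Only eagerzeroedthick zeroes every block while the disk is created.
        self.zeroed = set(range(size_blocks)) if fmt == "eagerzeroedthick" else set()
        self.data = {}

    def write(self, block, payload):
        if not 0 <= block < self.size_blocks:
            raise IndexError(block)
        self.allocated.add(block)              # no-op for the thick formats
        if self.fmt in ("thin", "zeroedthick"):
            self.zeroed.add(block)             # zeroed on demand, at first write
        self.data[block] = payload

    def read(self, block):
        if block in self.data:
            return self.data[block]
        # Plain thick never zeroes, so an unwritten block can expose stale data.
        return "stale" if self.fmt == "thick" else "zeros"
```

A new thin disk starts with no allocated blocks and grows one block per first write, whereas a zeroedthick disk starts fully allocated but with nothing zeroed, and an eagerzeroedthick disk starts fully allocated and fully zeroed, which is why it takes longer to create.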

Determine use cases for and configure VMware DirectPath I/O

Official Documentation: vSphere Virtual Machine Administration, Chapter 8, Section “Add a PCI Device in the vSphere Client”, page 149.

vSphere DirectPath I/O allows a guest operating system on a virtual machine to directly access physical PCI and PCIe devices connected to a host. Each virtual machine can be connected to up to six PCI devices. PCI devices connected to a host can be marked as available for passthrough from the Hardware Advanced Settings in the Configuration tab for the host.

Snapshots are not supported with vSphere DirectPath I/O PCI devices.

Prerequisites

- To use DirectPath I/O, verify that the host has Intel® Virtualization Technology for Directed I/O (VT-d) or AMD I/O Virtualization Technology (IOMMU) enabled in the BIOS.

- Verify that the PCI devices are connected to the host and marked as available for passthrough.

- Verify that the virtual machine is using hardware version 7 or later.
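The prerequisites above, together with the six-device limit, can be expressed as a simple pre-flight check. This Python sketch uses hypothetical `Host` and `VM` records that you fill in by hand (for example from what the vSphere Client shows); it does not query a host, and the PCI address format used for `device` is only an illustration.

```python
from dataclasses import dataclass, field

MAX_PCI_DEVICES_PER_VM = 6   # limit stated in the text above
MIN_HARDWARE_VERSION = 7


@dataclass
class Host:
    iommu_enabled: bool                                     # VT-d / AMD IOMMU enabled in the BIOS
    passthrough_devices: set = field(default_factory=set)   # devices marked available for passthrough


@dataclass
class VM:
    hardware_version: int
    pci_devices: list = field(default_factory=list)          # passthrough devices already attached


def directpath_problems(host, vm, device):
    """Return the reasons `device` cannot be added to `vm`; an empty list means go ahead."""
    problems = []
    if not host.iommu_enabled:
        problems.append("enable VT-d or AMD IOMMU in the host BIOS")
    if device not in host.passthrough_devices:
        problems.append(f"mark {device} as available for passthrough on the host")
    if vm.hardware_version < MIN_HARDWARE_VERSION:
        problems.append("upgrade the VM to hardware version 7 or later")
    if len(vm.pci_devices) >= MAX_PCI_DEVICES_PER_VM:
        problems.append("the VM already has the maximum of six PCI devices")
    return problems
```

For instance, `directpath_problems(Host(True, {"0000:0b:00.0"}), VM(8), "0000:0b:00.0")` returns an empty list, so the Add Hardware procedure below can proceed.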

Procedure

- In the vSphere Client inventory, right-click the virtual machine and select Edit Settings.

- On the Hardware tab, click Add.

- In the Add Hardware wizard, select PCI Device and click Next.

- Select the passthrough device to connect to the virtual machine from the drop-down list and click Next.

- Click Finish.

More information

- VMware document Configuration Examples and Troubleshooting for VMDirectPath

- VMware KB article 1010789 Configuring VMDirectPath I/O pass-through devices on an ESX host.

- Petri IT Knowledgebase http://www.petri.co.il/vmware-esxi4-vmdirectpath.htm

Determine requirements for and configure NPIV

Official Documentation: vSphere Virtual Machine Administration, Chapter 8, Section “Configure Fibre Channel NPIV Settings in the vSphere Web Client / vSphere Client”, page 123. Detailed information can be found in the vSphere Storage Guide, Chapter 4, “N-Port ID Virtualization”, page 41.

N-port ID virtualization (NPIV) provides the ability to share a single physical Fibre Channel HBA port among multiple virtual ports, each with unique identifiers. This capability lets you control virtual machine access to LUNs on a per-virtual machine basis.

Each virtual port is identified by a pair of world wide names (WWNs): a world wide port name (WWPN) and a world wide node name (WWNN). These WWNs are assigned by vCenter Server.

NPIV support is subject to the following limitations:

- NPIV must be enabled on the SAN switch. Contact the switch vendor for information about enabling NPIV on their devices.

- NPIV is supported only for virtual machines with RDM disks. Virtual machines with regular virtual disks continue to use the WWNs of the host’s physical HBAs.

- The physical HBAs on the ESXi host must have access to a LUN using its WWNs in order for any virtual machines on that host to have access to that LUN using their NPIV WWNs. Ensure that access is provided to both the host and the virtual machines.

- The physical HBAs on the ESXi host must support NPIV. If the physical HBAs do not support NPIV, the virtual machines running on that host will fall back to using the WWNs of the host’s physical HBAs for LUN access.

- Each virtual machine can have up to 4 virtual ports. NPIV-enabled virtual machines are assigned exactly 4 NPIV-related WWNs, which are used to communicate with physical HBAs through virtual ports. Therefore, virtual machines can utilize up to 4 physical HBAs for NPIV purposes.
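The limitations above boil down to two questions: will a given disk's traffic use the virtual machine's NPIV WWNs or the host's, and is the surrounding configuration valid? A minimal Python sketch, with hypothetical function names and boolean inputs that you would gather yourself from the switch, the array and the host:

```python
MAX_VIRTUAL_PORTS_PER_VM = 4


def wwns_used(disk_is_rdm, vm_has_npiv_wwns, host_hba_supports_npiv):
    """Which WWNs carry I/O for one disk, following the fallback rules above."""
    if disk_is_rdm and vm_has_npiv_wwns and host_hba_supports_npiv:
        return "vm"      # traffic goes through a VPORT with the VM's own WWN pair
    return "host"        # regular virtual disks, or no NPIV support: physical HBA WWNs


def npiv_config_problems(switch_npiv_enabled, lun_visible_to_host_wwns,
                         lun_visible_to_vm_wwns, virtual_ports):
    """List configuration gaps that would prevent NPIV-based LUN access."""
    problems = []
    if not switch_npiv_enabled:
        problems.append("enable NPIV on the SAN switch")
    if not lun_visible_to_host_wwns:
        problems.append("present the LUN to the host's physical HBA WWNs as well")
    if not lun_visible_to_vm_wwns:
        problems.append("present the LUN to the VM's NPIV WWNs")
    if virtual_ports > MAX_VIRTUAL_PORTS_PER_VM:
        problems.append("a VM can have at most 4 virtual ports")
    return problems
```

Note that the second check reflects the requirement that both the host and the virtual machine must have access to the LUN; presenting it only to the virtual machine's WWNs is not enough.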

Prerequisites

- To edit the virtual machine’s WWNs, power off the virtual machine.

- Verify that the virtual machine has a datastore containing a LUN that is available to the host.

How NPIV-Based LUN Access Works

NPIV enables a single FC HBA port to register several unique WWNs with the fabric, each of which can be assigned to an individual virtual machine.

SAN objects, such as switches, HBAs, storage devices, or virtual machines can be assigned World Wide Name (WWN) identifiers. WWNs uniquely identify such objects in the Fibre Channel fabric. When virtual machines have WWN assignments, they use them for all RDM traffic, so the LUNs pointed to by any of the RDMs on the virtual machine must not be masked against its WWNs. When virtual machines do not have WWN assignments, they access storage LUNs with the WWNs of their host’s physical HBAs. By using NPIV, however, a SAN administrator can monitor and route storage access on a per virtual machine basis. The following section describes how this works.

When a virtual machine has a WWN assigned to it, the virtual machine’s configuration file (.vmx) is updated to include a WWN pair (consisting of a World Wide Port Name, WWPN, and a World Wide Node Name, WWNN). When that virtual machine is powered on, the VMkernel instantiates a virtual port (VPORT) on the physical HBA, which is used to access the LUN. The VPORT is a virtual HBA that appears to the FC fabric as a physical HBA; that is, it has its own unique identifier, the WWN pair that was assigned to the virtual machine. Each VPORT is specific to the virtual machine. When the virtual machine is powered off, the VPORT is destroyed on the host and no longer appears to the FC fabric. When a virtual machine is migrated from one host to another, the VPORT is closed on the first host and opened on the destination host.

If NPIV is enabled, WWN pairs (WWPN & WWNN) are specified for each virtual machine at creation time. When a virtual machine using NPIV is powered on, it uses each of these WWN pairs in sequence to try to discover an access path to the storage. The number of VPORTs that are instantiated equals the number of physical HBAs present on the host. A VPORT is created on each physical HBA that a physical path is found on. Each physical path is used to determine the virtual path that will be used to access the LUN. Note that HBAs that are not NPIV-aware are skipped in this discovery process because VPORTs cannot be instantiated on them.
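The discovery behaviour described above can be sketched in Python as follows. This is one simplified reading of the text, not VMkernel code: it assumes the virtual machine's WWN pairs are handed out in order, one per NPIV-capable physical HBA that has a path to the LUN. The HBA names and WWN strings in the example are invented placeholders.

```python
from dataclasses import dataclass


@dataclass
class PhysicalHba:
    name: str
    npiv_capable: bool
    has_path_to_lun: bool


def instantiate_vports(hbas, wwn_pairs):
    """Map HBA name -> (WWPN, WWNN) for every HBA that gets a VPORT at power-on."""
    vports = {}
    pairs = iter(wwn_pairs)
    for hba in hbas:
        if not hba.npiv_capable:
            continue                 # VPORTs cannot be instantiated on non-NPIV HBAs
        if not hba.has_path_to_lun:
            continue                 # a VPORT is only created where a physical path exists
        pair = next(pairs, None)
        if pair is None:
            break                    # no WWN pairs left for this VM
        vports[hba.name] = pair
    return vports
```

With four HBAs where vmhba2 is not NPIV-aware and vmhba3 has no path to the LUN, only vmhba1 and vmhba4 receive VPORTs, using the first and second WWN pairs respectively.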

Determine appropriate RAID level for various Virtual Machine workloads

There are some very interesting sites and documents to check out.

There is a VMware document about the best practices for VMFS, http://www.vmware.com/pdf/vmfs-best-practices-wp.pdf

The Yellow Bricks Blog article IOps?, http://www.yellow-bricks.com/2009/12/23/iops/

The VMToday blog article about Storage Basics – Part VI: Storage Workload Characterization, http://vmtoday.com/2010/04/storage-basics-part-vi-storage-workload-characterization/

These resources provide information about how to select the best RAID level for virtual machine workloads.
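A common way to compare RAID levels for a workload is to apply a write penalty: each front-end write costs several back-end disk I/Os because of mirroring or parity updates. The Python sketch below uses the rule-of-thumb penalties often quoted in articles such as the Yellow Bricks post above (RAID 0: 1, RAID 1 and RAID 10: 2, RAID 5: 4, RAID 6: 6). Those values, the function names and the per-disk IOPS figure you pass in are assumptions to adjust for your array, not vendor specifications.

```python
import math

# Rule-of-thumb back-end I/Os per front-end write for each RAID level.
WRITE_PENALTY = {0: 1, 1: 2, 10: 2, 5: 4, 6: 6}


def backend_iops(frontend_iops, read_pct, raid_level):
    """Disk I/Os per second needed to serve `frontend_iops` with `read_pct` percent reads."""
    if not 0 <= read_pct <= 100:
        raise ValueError("read_pct must be between 0 and 100")
    reads = frontend_iops * read_pct
    writes = frontend_iops * (100 - read_pct) * WRITE_PENALTY[raid_level]
    return (reads + writes) / 100


def disks_needed(frontend_iops, read_pct, raid_level, iops_per_disk):
    """Minimum number of spindles to absorb the back-end load."""
    return math.ceil(backend_iops(frontend_iops, read_pct, raid_level) / iops_per_disk)
```

For 1,000 front-end IOPS at 70% reads, this gives 1,300, 1,900 and 2,500 back-end IOPS for RAID 10, RAID 5 and RAID 6, or 8, 11 and 14 disks at an assumed 180 IOPS per disk. The more write-heavy the workload, the wider that gap becomes.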

Apply VMware storage best practices

Official Documentation: Overview at: http://www.vmware.com/technical-resources/virtual-storage/best-practices.html Documentation can be found at: http://www.vmware.com/technical-resources/virtual-storage/resources.html

Many of the best practices for physical storage environments also apply to virtual storage environments. It is best to keep in mind the following rules of thumb when configuring your virtual storage infrastructure:

- Configure and size storage resources for optimal I/O performance first, then for storage capacity.

This means that you should consider throughput capability and not just capacity. Imagine a very large parking lot with only one lane of traffic for an exit: regardless of the lot's capacity, throughput is limited by that single lane. It is critical to take into consideration the size and storage resources necessary to handle your volume of traffic, as well as the total capacity.

- Aggregate application I/O requirements for the environment and size them accordingly.

As you consolidate multiple workloads onto a set of ESX servers that share a pool of storage, do not exceed the total throughput capacity of that storage resource. Looking at the throughput characterization of the physical environment prior to virtualization can help you predict what throughput each workload will generate in the virtual environment.

- Base your storage choices on your I/O workload.

Use an aggregation of the measured workload, rather than an estimate, to determine what protocol, redundancy protection and array features to use. The best results come from measuring your applications' I/O throughput and capacity for a period of several days prior to moving them to a virtualized environment.

- Remember that pooling storage resources increases utilization and simplifies management, but can lead to contention.

There are significant benefits to pooling storage resources, including increased storage resource utilization and ease of management. However, at times, heavy workloads can have an impact on performance. It is a good idea to use a shared VMFS volume for most virtual disks, but consider placing heavy I/O virtual disks on a dedicated VMFS volume or an RDM to reduce the effects of contention.
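Aggregating measured workloads means summing them interval by interval, not adding up each workload's individual peak, because peaks rarely coincide. The Python sketch below assumes you already have per-workload IOPS samples taken over the same intervals (for example, over the several days of measurement recommended above); the function names and the 20% headroom default are hypothetical choices for illustration.

```python
def aggregate_peak(samples_by_workload):
    """Peak of the summed load, given per-workload samples taken at the same intervals."""
    lengths = {len(samples) for samples in samples_by_workload.values()}
    if len(lengths) != 1:
        raise ValueError("every workload needs one sample per interval")
    totals = [sum(interval) for interval in zip(*samples_by_workload.values())]
    return max(totals)


def fits(samples_by_workload, datastore_capacity_iops, headroom=0.2):
    """True if the aggregate peak stays below capacity minus a safety margin."""
    return aggregate_peak(samples_by_workload) <= datastore_capacity_iops * (1 - headroom)
```

For `{"db": [100, 400, 150], "web": [300, 50, 200]}` the aggregate peak is 450 IOPS, while the sum of the individual peaks would be 700, so sizing from individual peaks would overestimate the shared requirement.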

More information

VMware Virtual Machine File System: Technical Overview and Best Practices http://www.vmware.com/pdf/vmfs-best-practices-wp.pdf

Understand use cases for Raw Device Mapping

Official Documentation: Chapter 14 in the vSphere Storage Guide is dedicated to Raw Device Mappings (starting page 135).

This chapter starts with an introduction to RDMs, discusses their characteristics, and concludes with information on how to create RDMs and how to manage paths for a mapped raw LUN.

Summary:

Raw device mapping (RDM) provides a mechanism for a virtual machine to have direct access to a LUN on the physical storage subsystem (Fibre Channel or iSCSI only).

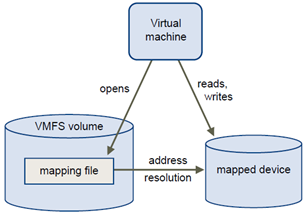

An RDM is a mapping file in a separate VMFS volume that acts as a proxy for a raw physical storage device. The RDM allows a virtual machine to directly access and use the storage device. The RDM contains metadata for managing and redirecting disk access to the physical device.

The file gives you some of the advantages of direct access to a physical device while keeping some advantages of a virtual disk in VMFS. As a result, it merges VMFS manageability with raw device access.

RDMs can be described in terms such as mapping a raw device into a datastore, mapping a system LUN, or mapping a disk file to a physical disk volume. All these terms refer to RDMs.

Although VMware recommends that you use VMFS datastores for most virtual disk storage, on certain occasions, you might need to use raw LUNs or logical disks located in a SAN.

For example, you need to use raw LUNs with RDMs in the following situations:

- When SAN snapshot or other layered applications run in the virtual machine. The RDM better enables scalable backup offloading systems by using features inherent to the SAN.

- In any MSCS clustering scenario that spans physical hosts — virtual-to-virtual clusters as well as physical-to-virtual clusters. In this case, cluster data and quorum disks should be configured as RDMs rather than as virtual disks on a shared VMFS.

Think of an RDM as a symbolic link from a VMFS volume to a raw LUN. The mapping makes LUNs appear as files in a VMFS volume. The RDM, not the raw LUN, is referenced in the virtual machine configuration. The RDM contains a reference to the raw LUN.

Using RDMs, you can:

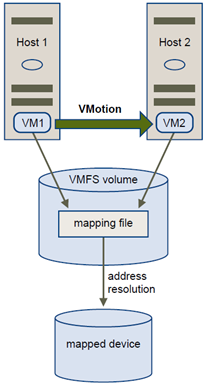

- Use vMotion to migrate virtual machines using raw LUNs.

- Add raw LUNs to virtual machines using the vSphere Client.

- Use file system features such as distributed file locking, permissions, and naming.

Two compatibility modes are available for RDMs:

- Virtual compatibility mode allows an RDM to act exactly like a virtual disk file, including the use of snapshots.

- Physical compatibility mode allows direct access of the SCSI device for those applications that need lower level control.

Benefits of Raw Device Mapping

An RDM provides a number of benefits, but it should not be used in every situation. In general, virtual disk files are preferable to RDMs for manageability. However, when you need raw devices, you must use the RDM. RDM offers several benefits.

| Benefit | Description |
| --- | --- |
| User-Friendly Persistent Names | Provides a user-friendly name for a mapped device. When you use an RDM, you do not need to refer to the device by its device name. You refer to it by the name of the mapping file, for example: /vmfs/volumes/myVolume/myVMDirectory/myRawDisk.vmdk |
| Dynamic Name Resolution | Stores unique identification information for each mapped device. VMFS associates each RDM with its current SCSI device, regardless of changes in the physical configuration of the server because of adapter hardware changes, path changes, device relocation, and so on. |
| Distributed File Locking | Makes it possible to use VMFS distributed locking for raw SCSI devices. Distributed locking on an RDM makes it safe to use a shared raw LUN without losing data when two virtual machines on different servers try to access the same LUN. |
| File Permissions | Makes file permissions possible. The permissions of the mapping file are enforced at file-open time to protect the mapped volume. |
| File System Operations | Makes it possible to use file system utilities to work with a mapped volume, using the mapping file as a proxy. Most operations that are valid for an ordinary file can be applied to the mapping file and are redirected to operate on the mapped device. |
| Snapshots | Makes it possible to use virtual machine snapshots on a mapped volume. Snapshots are not available when the RDM is used in physical compatibility mode. |
| vMotion | Lets you migrate a virtual machine with vMotion. The mapping file acts as a proxy to allow vCenter Server to migrate the virtual machine by using the same mechanism that exists for migrating virtual disk files. |
| SAN Management Agents | Makes it possible to run some SAN management agents inside a virtual machine. Similarly, any software that needs to access a device by using hardware-specific SCSI commands can be run in a virtual machine. This kind of software is called SCSI target-based software. When you use SAN management agents, select a physical compatibility mode for the RDM. |
| N-Port ID Virtualization (NPIV) | Makes it possible to use the NPIV technology that allows a single Fibre Channel HBA port to register with the Fibre Channel fabric using several worldwide port names (WWPNs). This ability makes the HBA port appear as multiple virtual ports, each having its own ID and virtual port name. Virtual machines can then claim each of these virtual ports and use them for all RDM traffic. NOTE: You can use NPIV only for virtual machines with RDM disks. |

Limitations of Raw Device Mapping

Certain limitations exist when you use RDMs.

- The RDM is not available for direct-attached block devices or certain RAID devices. The RDM uses a SCSI serial number to identify the mapped device. Because block devices and some direct-attach RAID devices do not export serial numbers, they cannot be used with RDMs.

- If you are using the RDM in physical compatibility mode, you cannot use a snapshot with the disk. Physical compatibility mode allows the virtual machine to manage its own, storage-based, snapshot or mirroring operations.

Virtual machine snapshots are available for RDMs with virtual compatibility mode.

- You cannot map to a disk partition. RDMs require the mapped device to be a whole LUN.

RDM Virtual and Physical Compatibility Modes

You can use RDMs in virtual compatibility or physical compatibility modes. Virtual mode specifies full virtualization of the mapped device. Physical mode specifies minimal SCSI virtualization of the mapped device, allowing the greatest flexibility for SAN management software.

In virtual mode, the VMkernel sends only READ and WRITE to the mapped device. The mapped device appears to the guest operating system exactly the same as a virtual disk file in a VMFS volume. The real hardware characteristics are hidden. If you are using a raw disk in virtual mode, you can realize the benefits of VMFS such as advanced file locking for data protection and snapshots for streamlining development processes. Virtual mode is also more portable across storage hardware than physical mode, presenting the same behavior as a virtual disk file.

In physical mode, the VMkernel passes all SCSI commands to the device, with one exception: the REPORT LUNs command is virtualized so that the VMkernel can isolate the LUN to the owning virtual machine. Otherwise, all physical characteristics of the underlying hardware are exposed. Physical mode is useful to run SAN management agents or other SCSI target-based software in the virtual machine. Physical mode also allows virtual-to-physical clustering for cost-effective high availability.

VMFS5 supports greater than 2TB disk size for RDMs in physical compatibility mode only. The following restrictions apply:

- You cannot relocate larger than 2TB RDMs to datastores other than VMFS5.

- You cannot convert larger than 2TB RDMs to virtual disks, or perform other operations that involve RDM to virtual disk conversion. Such operations include cloning.
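The rules above for choosing between virtual and physical compatibility mode can be condensed into a small decision helper. This Python sketch is hypothetical: the parameter names are invented, it treats the 2TB threshold as a simple "greater than 2" comparison, and it defaults to virtual mode because the text describes it as the more portable option.

```python
def choose_rdm_mode(need_vm_snapshots=False, need_san_agents=False,
                    v2p_cluster=False, size_tb=1.0, datastore_is_vmfs5=True):
    """Pick 'virtual' or 'physical' compatibility mode from the rules above."""
    large = size_tb > 2
    if large and not datastore_is_vmfs5:
        raise ValueError("RDMs larger than 2TB require VMFS5")
    # SAN management agents, virtual-to-physical clustering and >2TB RDMs all need physical mode.
    needs_physical = need_san_agents or v2p_cluster or large
    if needs_physical and need_vm_snapshots:
        raise ValueError("VM snapshots are not available in physical compatibility mode")
    # Virtual mode is the more portable default when nothing forces physical mode.
    return "physical" if needs_physical else "virtual"
```

A conflicting request, such as VM snapshots on a LUN managed by an in-guest SAN agent, raises an error instead of silently picking a mode; in that case the storage array's own snapshot features are the usual alternative.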

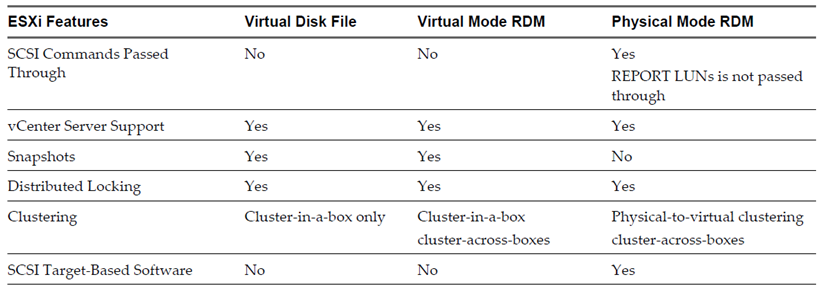

Comparing Available SCSI Device Access Modes

The ways of accessing a SCSI-based storage device include a virtual disk file on a VMFS datastore, virtual mode RDM, and physical mode RDM.

To help you choose among the available access modes for SCSI devices, the following table provides a quick comparison of features available with the different modes.

VMware recommends that you use virtual disk files for the cluster-in-a-box type of clustering. If you plan to reconfigure your cluster-in-a-box clusters as cluster-across-boxes clusters, use virtual mode RDMs for the cluster-in-a-box clusters.

Create Virtual Machines with RDMs

When you give your virtual machine direct access to a raw SAN LUN, you create a mapping file (RDM) that resides on a VMFS datastore and points to the LUN. Although the mapping file has the same .vmdk extension as a regular virtual disk file, the RDM file contains only mapping information. The actual virtual disk data is stored directly on the LUN.

You can create the RDM as an initial disk for a new virtual machine or add it to an existing virtual machine. When creating the RDM, you specify the LUN to be mapped and the datastore on which to put the RDM.

Procedure

- Follow all steps required to create a custom virtual machine.

- In the Select a Disk page, select Raw Device Mapping, and click Next.

- From the list of SAN disks or LUNs, select a raw LUN for your virtual machine to access directly.

- Select a datastore for the RDM mapping file.

You can place the RDM file on the same datastore where your virtual machine configuration file resides, or select a different datastore.

NOTE: To use vMotion for virtual machines with NPIV enabled, make sure that the RDM files of the virtual machines are located on the same datastore. You cannot perform Storage vMotion when NPIV is enabled.

- Select a compatibility mode.

| Option | Description |
| --- | --- |
| Physical | Allows the guest operating system to access the hardware directly. Physical compatibility is useful if you are using SAN-aware applications on the virtual machine. However, powered on virtual machines that use RDMs configured for physical compatibility cannot be migrated if the migration involves copying the disk. Such virtual machines cannot be cloned or cloned to a template either. |
| Virtual | Allows the RDM to behave as if it were a virtual disk, so you can use such features as snapshotting, cloning, and so on. |

- Select a virtual device node.

- If you select Independent mode, choose one of the following.

| Option | Description |
| --- | --- |
| Persistent | Changes are immediately and permanently written to the disk. |
| Nonpersistent | Changes to the disk are discarded when you power off or revert to the snapshot. |

- Click Next.

- In the Ready to Complete New Virtual Machine page, review your selections.

- Click Finish to complete your virtual machine.

More information

http://www.douglassmith.me/2010/07/understand-use-cases-for-raw-device-mapping/

Performance Study “Performance Characterization of VMFS and RDM Using a SAN”

Configure vCenter Server storage filters

Official Documentation: vSphere Storage Guide, Chapter 13 “Working with Datastores”, page 125.

When you perform VMFS datastore management operations, vCenter Server uses default storage protection filters. The filters help you to avoid storage corruption by retrieving only the storage devices that can be used for a particular operation. Unsuitable devices are not displayed for selection. You can turn off the filters to view all devices.

Before making any changes to the device filters, consult with the VMware support team. You can turn off the filters only if you have other methods to prevent device corruption.

Procedure

- In the vSphere Client, select Administration > vCenter Server Settings.

- In the settings list, select Advanced Settings.

- In the Key text box, type a key.

| Key | Filter Name | Description |
| --- | --- | --- |
| config.vpxd.filter.vmfsFilter | VMFS Filter | Filters out storage devices, or LUNs, that are already used by a VMFS datastore on any host managed by vCenter Server. The LUNs do not show up as candidates to be formatted with another VMFS datastore or to be used as an RDM. |
| config.vpxd.filter.rdmFilter | RDM Filter | Filters out LUNs that are already referenced by an RDM on any host managed by vCenter Server. The LUNs do not show up as candidates to be formatted with VMFS or to be used by a different RDM. If you need virtual machines to access the same LUN, the virtual machines must share the same RDM mapping file. For information about this type of configuration, see the vSphere Resource Management documentation. |
| config.vpxd.filter.SameHostAndTransportsFilter | Same Host and Transports Filter | Filters out LUNs ineligible for use as VMFS datastore extents because of host or storage type incompatibility. Prevents you from adding the following LUNs as extents: LUNs not exposed to all hosts that share the original VMFS datastore, and LUNs that use a storage type different from the one the original VMFS datastore uses. |
| config.vpxd.filter.hostRescanFilter | Host Rescan Filter | Automatically rescans and updates VMFS datastores after you perform datastore management operations. The filter helps provide a consistent view of all VMFS datastores on all hosts managed by vCenter Server. NOTE: If you present a new LUN to a host or a cluster, the hosts automatically perform a rescan whether the Host Rescan Filter is on or off. |

- In the Value text box, type False for the specified key.

- Click Add.

- Click OK.

You are not required to restart the vCenter Server system.
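If you script or document which filters you turned off, it helps to derive the Key/Value pairs from the table above rather than retyping the keys. This Python sketch only builds the pairs you would enter under Advanced Settings; the short filter names are hypothetical aliases, and it does not talk to vCenter Server.

```python
STORAGE_FILTER_KEYS = {
    "vmfs": "config.vpxd.filter.vmfsFilter",
    "rdm": "config.vpxd.filter.rdmFilter",
    "same_host_and_transports": "config.vpxd.filter.SameHostAndTransportsFilter",
    "host_rescan": "config.vpxd.filter.hostRescanFilter",
}


def settings_to_disable(filters):
    """Key/Value pairs to enter under Advanced Settings to turn the given filters off."""
    unknown = set(filters) - set(STORAGE_FILTER_KEYS)
    if unknown:
        raise KeyError(f"unknown filter(s): {sorted(unknown)}")
    # The value False turns a filter off, as described in the procedure above.
    return [(STORAGE_FILTER_KEYS[name], "False") for name in sorted(set(filters))]
```

Rejecting unknown names up front avoids adding a misspelled key, which would leave the intended filter active.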

Other references:

- Yellow Bricks on Storage Filters: http://www.yellow-bricks.com/2010/08/11/storage-filters/

Understand and apply VMFS re-signaturing

Official Documentation: vSphere Storage Guide, Chapter 13 “Working with Datastores”, page 122.

Use datastore resignaturing if you want to retain the data stored on the VMFS datastore copy.

When resignaturing a VMFS copy, ESXi assigns a new UUID and a new label to the copy, and mounts the copy as a datastore distinct from the original.

The default format of the new label assigned to the datastore is snap-snapID-oldLabel, where snapID is an integer and oldLabel is the label of the original datastore.
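When scanning a list of datastores for resignatured copies, the default label format can be generated and parsed mechanically. A minimal Python sketch with hypothetical helper names; it keeps snapID as a string when parsing and assumes snapID itself contains no hyphen, while the original label may.

```python
import re


def resignatured_label(snap_id, old_label):
    """Default label given to a resignatured copy: snap-snapID-oldLabel."""
    return f"snap-{snap_id}-{old_label}"


def split_resignatured_label(label):
    """Return (snapID, oldLabel) for a label in the default format, else None."""
    match = re.fullmatch(r"snap-([^-]+)-(.+)", label)
    return (match.group(1), match.group(2)) if match else None
```

For example, `split_resignatured_label("snap-17-my-ds")` returns `("17", "my-ds")`, and an ordinary label such as `datastore1` returns `None`.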

When you perform datastore resignaturing, consider the following points:

- Datastore resignaturing is irreversible.

- The LUN copy that contains the VMFS datastore that you resignature is no longer treated as a LUN copy.

- A spanned datastore can be resignatured only if all its extents are online.

- The resignaturing process is crash and fault tolerant. If the process is interrupted, you can resume it later.

- You can mount the new VMFS datastore without a risk of its UUID colliding with UUIDs of any other datastore, such as an ancestor or child in a hierarchy of LUN snapshots.

Prerequisites

To resignature a mounted datastore copy, first unmount it.

Before you resignature a VMFS datastore, perform a storage rescan on your host so that the host updates its view of LUNs presented to it and discovers any LUN copies.

Procedure

- Log in to the vSphere Client and select the server from the inventory panel.

- Click the Configuration tab and click Storage in the Hardware panel.

- Click Add Storage.

- Select the Disk/LUN storage type and click Next.

- From the list of LUNs, select the LUN that has a datastore name displayed in the VMFS Label column and click Next. A name in the VMFS Label column indicates that the LUN contains a copy of an existing VMFS datastore.

- Under Mount Options, select Assign a New Signature and click Next.

- In the Ready to Complete page, review the datastore configuration information and click Finish.

What to do next

After resignaturing, you might have to do the following:

- If the resignatured datastore contains virtual machines, update references to the original VMFS datastore in the virtual machine files, including .vmx, .vmdk, .vmsd, and .vmsn.

- To power on virtual machines, register them with vCenter Server.

Other references:

Understand and apply LUN masking using PSA-related commands

Official Documentation: vSphere Storage Guide, Chapter 17 “Understanding Multipathing and Failover”, page 169.

Mask Paths

You can prevent the host from accessing storage devices or LUNs or from using individual paths to a LUN.

Use the esxcli commands to mask the paths. When you mask paths, you create claim rules that assign the MASK_PATH plug-in to the specified paths.

In the procedure, --server=server_name specifies the target server. The specified target server prompts you for a user name and password. Other connection options, such as a configuration file or session file, are supported. For a list of connection options, see Getting Started with vSphere Command-Line Interfaces.

Prerequisites

Install vCLI or deploy the vSphere Management Assistant (vMA) virtual machine. See Getting Started with vSphere Command-Line Interfaces. For troubleshooting, run esxcli commands in the ESXi Shell.

Procedure

- Check what the next available rule ID is.

esxcli --server=server_name storage core claimrule list

The claim rules that you use to mask paths should have rule IDs in the range of 101 through 200. If this command shows that rules 101 and 102 already exist, you can specify 103 for the rule to add.

- Assign the MASK_PATH plug-in to a path by creating a new claim rule for the plug-in.

esxcli --server=server_name storage core claimrule add -P MASK_PATH

The rule also needs its rule ID (-r) and the path to match. For example, to mask LUN 149 on vmhba0, channel 0, target 0 with rule 103: esxcli --server=server_name storage core claimrule add -r 103 -t location -A vmhba0 -C 0 -T 0 -L 149 -P MASK_PATH

- Load the MASK_PATH claim rule into your system.

esxcli --server=server_name storage core claimrule load

- Verify that the MASK_PATH claim rule was added correctly.

esxcli --server=server_name storage core claimrule list

- If a claim rule for the masked path exists, remove the rule.

esxcli --server=server_name storage core claiming unclaim

- Run the path claiming rules.

esxcli --server=server_name storage core claimrule run

After you assign the MASK_PATH plug-in to a path, the path state becomes irrelevant and is no longer maintained by the host. As a result, commands that display the masked path's information might show the path state as dead.
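Step 1 of the procedure asks you to choose the next free rule ID in the 101 through 200 range. The Python sketch below shows that selection as a hypothetical helper, next_mask_rule_id. It assumes you have already collected the existing rule IDs from the claimrule list output yourself, because the sketch does not call esxcli. It returns the lowest unused ID in the range, so a gap left by a deleted rule is reused.

```python
from typing import Iterable, Optional

# Rule ID range recommended for MASK_PATH claim rules.
MASK_RULE_MIN = 101
MASK_RULE_MAX = 200


def next_mask_rule_id(existing_ids: Iterable[int]) -> Optional[int]:
    """Return the lowest unused rule ID in 101-200, or None if all are taken."""
    used = set(existing_ids)
    for rule_id in range(MASK_RULE_MIN, MASK_RULE_MAX + 1):
        if rule_id not in used:
            return rule_id
    return None


# IDs outside the 101-200 range (such as 0-4 or 65535) are simply ignored.
print(next_mask_rule_id([0, 1, 2, 3, 4, 101, 102, 65535]))  # 103
```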

Unmask Paths

When you need the host to access the masked storage device, unmask the paths to the device.

In the procedure, --server=server_name specifies the target server. The specified target server prompts you for a user name and password. Other connection options, such as a configuration file or session file, are supported. For a list of connection options, see Getting Started with vSphere Command-Line Interfaces.

Prerequisites

Install vCLI or deploy the vSphere Management Assistant (vMA) virtual machine. See Getting Started with vSphere Command-Line Interfaces. For troubleshooting, run esxcli commands in the ESXi Shell.

Procedure

- Delete the MASK_PATH claim rule.

esxcli --server=server_name storage core claimrule remove -r rule_ID

- Verify that the claim rule was deleted correctly.

esxcli --server=server_name storage core claimrule list

- Reload the path claiming rules from the configuration file into the VMkernel.

esxcli --server=server_name storage core claimrule load

- Run the esxcli --server=server_name storage core claiming unclaim command for each path to the masked storage device.

For example: esxcli --server=server_name storage core claiming unclaim -t location -A vmhba0 -C 0 -T 0 -L 149

- Run the path claiming rules.

esxcli --server=server_name storage core claimrule run

Your host can now access the previously masked storage device.

Other references:

- VMware KB 1009449 “Masking a LUN from ESX and ESXi using the MASK_PATH plug-in”: http://kb.vmware.com/kb/1009449

- VMware KB 1015252 “Unable to claim the LUN back after unmasking it”: http://kb.vmware.com/kb/1015252

- VMware KB 1014953 “Identifying disks when working with VMware ESX”: http://kb.vmware.com/kb/1014953

- Blog post by Jason Langer: http://virtuallanger.wordpress.com/2010/10/08/understand-and-apply-lun-masking-using-psa-related-commands/

Analyze I/O workloads to determine storage performance requirements

The VMware paper Storage Workload Characterization and Consolidation in Virtualized Environments covers this topic in depth.

Josh Townsend created a series of blog posts on his blog VMToday about everything you need to know about storage. See:

- Storage Basics – Part I: An Introduction

- Storage Basics – Part II: IOPS

- Storage Basics – Part III: RAID

- Storage Basics – Part IV: Interface

- Storage Basics – Part V: Controllers, Cache and Coalescing

- Storage Basics – Part VI: Storage Workload Characterization

- Storage Basics – Part VII: Storage Alignment

Identify and tag SSD devices

Official Documentation: vSphere Storage Guide, Chapter 15 “Solid State Disks Enablement”, page 143.

In addition to regular hard disk drives, ESXi supports Solid State Disks (SSDs).

Unlike the regular hard disks that are electromechanical devices containing moving parts, SSDs use semiconductors as their storage medium and have no moving parts.

On several storage arrays, the ESXi host can automatically distinguish SSDs from traditional hard disks. To tag the SSD devices that are not detected automatically, you can use PSA SATP claim rules.

Benefits of SSD Enablement

SSDs are very resilient and provide faster access to data.

SSD enablement results in several benefits:

- It enables usage of SSD as swap space for improved system performance. For information about using SSD datastores to allocate space for host cache, see the vSphere Resource Management documentation.

- It increases virtual machine consolidation ratio as SSDs can provide very high I/O throughput.

- It supports identification of virtual SSD device by the guest operating system.

Auto-Detection of SSD Devices

ESXi enables automatic detection of SSD devices by using an inquiry mechanism based on T10 standards.

ESXi enables detection of the SSD devices on a number of storage arrays. Check with your vendor whether your storage array supports ESXi SSD device detection.

You can use PSA SATP claim rules to tag devices that cannot be auto-detected.

Identifying a Virtual SSD Device

ESXi allows operating systems to auto-detect VMDKs residing on SSD datastores as SSD devices.

To verify if this feature is enabled, guest operating systems can use standard inquiry commands such as SCSI VPD Page (B1h) for SCSI devices and ATA IDENTIFY DEVICE (Word 217) for IDE devices.

For linked clones, native snapshots, and delta-disks, the inquiry commands report the virtual SSD status of the base disk.

Operating systems can auto-detect a VMDK as SSD under the following conditions:

- Detection of virtual SSDs is supported on ESXi 5 hosts and Virtual Hardware version 8.

- Detection of virtual SSDs is supported only on VMFS5 or later.

- If VMDKs are located on shared VMFS datastores with SSD device extents, the device must be marked as SSD on all hosts.

- For a VMDK to be detected as virtual SSD, all underlying physical extents should be SSD-backed.
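To summarize the four conditions above, the following Python sketch combines them into a single check. VmdkPlacement and guest_can_detect_virtual_ssd are hypothetical names, not vSphere API types. The sketch treats "ESXi 5 hosts and Virtual Hardware version 8" as minimum versions, which is an assumption. It also expects you to supply the per-extent and per-host SSD flags yourself.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class VmdkPlacement:
    """Hypothetical description of where a VMDK lives (not a vSphere API type)."""
    esxi_major_version: int          # e.g. 5 for ESXi 5.x
    virtual_hardware_version: int    # e.g. 8
    vmfs_major_version: int          # e.g. 5 for VMFS5
    extents_are_ssd: List[bool]      # one entry per underlying physical extent
    marked_ssd_on_hosts: List[bool]  # one entry per host that sees the extents


def guest_can_detect_virtual_ssd(p: VmdkPlacement) -> bool:
    """All four documented conditions must hold."""
    return (
        p.esxi_major_version >= 5
        and p.virtual_hardware_version >= 8
        and p.vmfs_major_version >= 5
        # Every underlying physical extent must be SSD-backed.
        and len(p.extents_are_ssd) > 0
        and all(p.extents_are_ssd)
        # On shared datastores, every host must mark the device as SSD.
        and len(p.marked_ssd_on_hosts) > 0
        and all(p.marked_ssd_on_hosts)
    )


print(guest_can_detect_virtual_ssd(VmdkPlacement(5, 8, 5, [True, True], [True, True])))  # True
```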

Best Practices for SSD Devices

Follow these best practices when you use SSD devices in a vSphere environment.

- Use datastores that are created on SSD storage devices to allocate space for ESXi host cache. For more information, see the vSphere Resource Management documentation.

- Make sure to use the latest firmware with SSD devices. Frequently check with your storage vendors for any updates.

- Carefully monitor how intensively you use the SSD device and calculate its estimated lifetime. The lifetime expectancy depends on how actively you continue to use the SSD device.

Estimate SSD Lifetime

When working with SSDs, monitor how actively you use them and calculate their estimated lifetime.

Typically, storage vendors provide reliable lifetime estimates for an SSD under ideal conditions. For example, a vendor might guarantee a lifetime of 5 years under the condition of 20GB writes per day. However, the more realistic life expectancy of the SSD will depend on how many writes per day your host actually generates.

Follow these steps to calculate the lifetime of the SSD.

Procedure

- Obtain the number of writes on the SSD by running the esxcli storage core device stats get -d device_ID command. The Write Operations item in the output shows the number. You can average this number over a period of time.

- Estimate the lifetime of your SSD by using the following formula:

estimated life span = (vendor-provided writes per day × vendor-provided life span) ÷ actual average writes per day

For example, if your vendor guarantees a lifetime of 5 years under the condition of 20GB writes per day, and the actual number of writes per day is 30GB, the life span of your SSD will be approximately 20 × 5 ÷ 30 ≈ 3.3 years.
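The lifetime formula can be written as a small Python function. estimate_ssd_lifetime_years is a hypothetical name. The sketch assumes you have already converted your measured writes into the same unit the vendor uses (for example GB per day), because the Write Operations counter itself is a count of operations.

```python
def estimate_ssd_lifetime_years(vendor_writes_per_day: float,
                                vendor_lifespan_years: float,
                                actual_writes_per_day: float) -> float:
    """Vendor writes/day x vendor life span / actual average writes/day.

    Both writes-per-day figures must use the same unit (for example GB).
    """
    if actual_writes_per_day <= 0:
        raise ValueError("actual_writes_per_day must be positive")
    return vendor_writes_per_day * vendor_lifespan_years / actual_writes_per_day


# The example from the text: 20GB/day for 5 years, but 30GB/day actually written.
print(round(estimate_ssd_lifetime_years(20, 5, 30), 1))  # 3.3
```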

Administer hardware acceleration for VAAI

Official Documentation: vSphere Storage Guide, Chapter 18 “Storage Hardware Acceleration”, page 173, is dedicated to VAAI.

The hardware acceleration functionality enables the ESXi host to integrate with compliant storage arrays and offload specific virtual machine and storage management operations to storage hardware. With the storage hardware assistance, your host performs these operations faster and consumes less CPU, memory, and storage fabric bandwidth.

Hardware Acceleration Benefits

When the hardware acceleration functionality is supported, the host can get hardware assistance and perform several tasks faster and more efficiently.

The host can get assistance with the following activities:

- Migrating virtual machines with Storage vMotion

- Deploying virtual machines from templates

- Cloning virtual machines or templates

- VMFS clustered locking and metadata operations for virtual machine files

- Writes to thin provisioned and thick virtual disks

- Creating fault-tolerant virtual machines

- Creating and cloning thick disks on NFS datastores

Hardware Acceleration Requirements

The hardware acceleration functionality works only if you use an appropriate host and storage array combination.

| ESXi | Block Storage Devices | NAS Devices |
|---|---|---|
| ESX/ESXi version 4.1 | Support block storage plug-ins for array integration (VAAI) | Not supported |
| ESXi version 5.0 | Support T10 SCSI standard or block storage plug-ins for array integration (VAAI) | Support NAS plug-ins for array integration |

NOTE If your SAN or NAS storage fabric uses an intermediate appliance in front of a storage system that supports hardware acceleration, the intermediate appliance must also support hardware acceleration and be properly certified. The intermediate appliance might be a storage virtualization appliance, I/O acceleration appliance, encryption appliance, and so on.

Hardware Acceleration Support Status

For each storage device and datastore, the vSphere Client displays the hardware acceleration support status in the Hardware Acceleration column of the Devices view and the Datastores view.

The status values are Unknown, Supported, and Not Supported. The initial value is Unknown.

For block devices, the status changes to Supported after the host successfully performs the offload operation. If the offload operation fails, the status changes to Not Supported. The status remains Unknown if the device provides partial hardware acceleration support.

With NAS, the status becomes Supported when the storage can perform at least one hardware offload operation.

When storage devices do not support or provide partial support for the host operations, your host reverts to its native methods to perform unsupported operations.
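The block-device status rules above behave like a small state machine. The Python sketch below models only those block-device rules and leaves out the NAS rule. The function name and its two inputs are a hypothetical simplification of what the host observes, not a vSphere interface.

```python
from enum import Enum
from typing import Optional


class HwAccelStatus(Enum):
    UNKNOWN = "Unknown"
    SUPPORTED = "Supported"
    NOT_SUPPORTED = "Not Supported"


def block_device_status(offload_result: Optional[bool],
                        partial_support: bool) -> HwAccelStatus:
    """Model the Hardware Acceleration column for a block device.

    offload_result: None if no offload has been attempted yet,
    True if the offload succeeded, False if it failed.
    """
    if partial_support:
        # Partial hardware acceleration support leaves the status at Unknown.
        return HwAccelStatus.UNKNOWN
    if offload_result is None:
        return HwAccelStatus.UNKNOWN  # the initial value
    return HwAccelStatus.SUPPORTED if offload_result else HwAccelStatus.NOT_SUPPORTED


print(block_device_status(True, False).value)  # Supported
```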

Hardware Acceleration for Block Storage Devices

With hardware acceleration, your host can integrate with block storage devices, Fibre Channel or iSCSI, and use certain storage array operations.

ESXi hardware acceleration supports the following array operations:

- Full copy, also called clone blocks or copy offload. Enables the storage arrays to make full copies of data within the array without having the host read and write the data. This operation reduces the time and network load when cloning virtual machines, provisioning from a template, or migrating with Storage vMotion.

- Block zeroing, also called write same. Enables storage arrays to zero out a large number of blocks to provide newly allocated storage, free of previously written data. This operation reduces the time and network load when creating virtual machines and formatting virtual disks.

- Hardware assisted locking, also called atomic test and set (ATS). Supports discrete virtual machine locking without use of SCSI reservations. This operation allows disk locking per sector, instead of the entire LUN as with SCSI reservations.

Check with your vendor for the hardware acceleration support. Certain storage arrays require that you activate the support on the storage side.

On your host, the hardware acceleration is enabled by default. If your storage does not support the hardware acceleration, you can disable it using the vSphere Client.

Disable Hardware Acceleration for Block Storage Devices

On your host, the hardware acceleration for block storage devices is enabled by default. You can use the vSphere Client advanced settings to disable the hardware acceleration operations.

As with any advanced settings, before you disable the hardware acceleration, consult with the VMware support team.

Procedure

- In the vSphere Client inventory panel, select the host.

- Click the Configuration tab, and click Advanced Settings under Software.

- Change the value for any of the options to 0 (disabled):

- VMFS3.HardwareAcceleratedLocking

- DataMover.HardwareAcceleratedMove

- DataMover.HardwareAcceleratedInit

Managing Hardware Acceleration on Block Storage Devices

To integrate with the block storage arrays and to benefit from the array hardware operations, vSphere uses the ESXi extensions referred to as Storage APIs – Array Integration, formerly called VAAI.

In the vSphere 5.0 release, these extensions are implemented as the T10 SCSI based commands. As a result, with the devices that support the T10 SCSI standard, your ESXi host can communicate directly and does not require the VAAI plug-ins.

If the device does not support T10 SCSI or provides partial support, ESXi reverts to using the VAAI plug-ins, installed on your host, or uses a combination of the T10 SCSI commands and plug-ins. The VAAI plug-ins are vendor-specific and can be either VMware or partner developed. To manage the VAAI capable device, your host attaches the VAAI filter and vendor-specific VAAI plug-in to the device.

For information about whether your storage requires VAAI plug-ins or supports hardware acceleration through T10 SCSI commands, see the vSphere Compatibility Guide or check with your storage vendor.

You can use several esxcli commands to query storage devices for the hardware acceleration support information. For the devices that require the VAAI plug-ins, the claim rule commands are also available.

Display Hardware Acceleration Plug-Ins and Filter

To communicate with the devices that do not support the T10 SCSI standard, your host uses a combination of a single VAAI filter and a vendor-specific VAAI plug-in. Use the esxcli command to view the hardware acceleration filter and plug-ins currently loaded into your system.

In the procedure, --server=server_name specifies the target server. The specified target server prompts you for a user name and password. Other connection options, such as a configuration file or session file, are supported. For a list of connection options, see Getting Started with vSphere Command-Line Interfaces.

Prerequisites

Install vCLI or deploy the vSphere Management Assistant (vMA) virtual machine. See Getting Started with vSphere Command-Line Interfaces. For troubleshooting, run esxcli commands in the ESXi Shell.

Procedure

- Run the esxcli --server=server_name storage core plugin list --plugin-class=value command.

For value, enter one of the following options:

- Type VAAI to display plug-ins.

The output of this command is similar to the following example:

# esxcli --server=server_name storage core plugin list --plugin-class=VAAI

| Plugin name | Plugin class |
|---|---|
| VMW_VAAIP_EQL | VAAI |
| VMW_VAAIP_NETAPP | VAAI |
| VMW_VAAIP_CX | VAAI |

- Type Filter to display the Filter.

The output of this command is similar to the following example:

# esxcli --server=server_name storage core plugin list --plugin-class=Filter

| Plugin name | Plugin class |
|---|---|
| VAAI_FILTER | Filter |

Verify Hardware Acceleration Support Status

Use the esxcli command to verify the hardware acceleration support status of a particular storage device.

In the procedure, --server=server_name specifies the target server. The specified target server prompts you for a user name and password. Other connection options, such as a configuration file or session file, are supported. For a list of connection options, see Getting Started with vSphere Command-Line Interfaces.

Prerequisites

Install vCLI or deploy the vSphere Management Assistant (vMA) virtual machine. See Getting Started with vSphere Command-Line Interfaces. For troubleshooting, run esxcli commands in the ESXi Shell.

Procedure

- Run the esxcli --server=server_name storage core device list -d device_ID command.

The output shows the hardware acceleration, or VAAI, status, which can be unknown, supported, or unsupported.

# esxcli --server=server_name storage core device list -d naa.XXXXXXXXXXXX4c

naa.XXXXXXXXXXXX4c

Display Name: XXXX Fibre Channel Disk(naa.XXXXXXXXXXXX4c)

Size: 20480

Device Type: Direct-Access

Multipath Plugin: NMP

XXXXXXXXXXXXXXXX

Attached Filters: VAAI_FILTER

VAAI Status: supported

XXXXXXXXXXXXXXXX

Verify Hardware Acceleration Support Details

Use the esxcli command to query the block storage device about the hardware acceleration support the device provides.

In the procedure, --server=server_name specifies the target server. The specified target server prompts you for a user name and password. Other connection options, such as a configuration file or session file, are supported. For a list of connection options, see Getting Started with vSphere Command-Line Interfaces.

Prerequisites

Install vCLI or deploy the vSphere Management Assistant (vMA) virtual machine. See Getting Started with vSphere Command-Line Interfaces. For troubleshooting, run esxcli commands in the ESXi Shell.

Procedure

- Run the esxcli --server=server_name storage core device vaai status get -d device_ID command.

If the device is managed by a VAAI plug-in, the output shows the name of the plug-in attached to the device. The output also shows the support status for each T10 SCSI based primitive, if available. Output appears in the following example:

# esxcli --server=server_name storage core device vaai status get -d naa.XXXXXXXXXXXX4c

naa.XXXXXXXXXXXX4c

VAAI Plugin Name: VMW_VAAIP_SYMM

ATS Status: supported

Clone Status: supported

Zero Status: supported

Delete Status: unsupported
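To check many devices, you might parse this output in a script. The Python sketch below assumes that the text looks like the example above (a device ID line followed by "Key: value" lines). The field names and layout may differ between releases. parse_vaai_status and supported_primitives are hypothetical helpers that work on captured text; they do not run esxcli.

```python
from typing import Dict, List


def parse_vaai_status(output: str) -> Dict[str, str]:
    """Collect 'Key: value' lines from esxcli text output into a dict."""
    fields: Dict[str, str] = {}
    for line in output.splitlines():
        key, sep, value = line.strip().partition(":")
        if sep:  # lines without a colon, like the device ID, are skipped
            fields[key.strip()] = value.strip()
    return fields


def supported_primitives(fields: Dict[str, str]) -> List[str]:
    """Return primitive names (ATS, Clone, ...) whose status is 'supported'."""
    suffix = " Status"
    return [key[: -len(suffix)] for key, value in fields.items()
            if key.endswith(suffix) and value == "supported"]


sample = """naa.XXXXXXXXXXXX4c
   VAAI Plugin Name: VMW_VAAIP_SYMM
   ATS Status: supported
   Clone Status: supported
   Zero Status: supported
   Delete Status: unsupported
"""
fields = parse_vaai_status(sample)
print(fields["VAAI Plugin Name"])    # VMW_VAAIP_SYMM
print(supported_primitives(fields))  # ['ATS', 'Clone', 'Zero']
```

parse_vaai_status should also pick up the single "VAAI Status" line from the device list output shown earlier.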

List Hardware Acceleration Claim Rules

Each block storage device managed by a VAAI plug-in needs two claim rules, one that specifies the hardware acceleration filter and another that specifies the hardware acceleration plug-in for the device. You can use the esxcli commands to list the hardware acceleration filter and plug-in claim rules.

Procedure

- To list the filter claim rules, run the esxcli --server=server_name storage core claimrule list --claimrule-class=Filter command.

In this example, the filter claim rules specify devices that should be claimed by the VAAI_FILTER filter.

# esxcli --server=server_name storage core claimrule list --claimrule-class=Filter

| Rule Class | Rule | Class | Type | Plugin | Matches |
|---|---|---|---|---|---|
| Filter | 65430 | runtime | vendor | VAAI_FILTER | vendor=EMC model=SYMMETRIX |
| Filter | 65430 | file | vendor | VAAI_FILTER | vendor=EMC model=SYMMETRIX |
| Filter | 65431 | runtime | vendor | VAAI_FILTER | vendor=DGC model=* |
| Filter | 65431 | file | vendor | VAAI_FILTER | vendor=DGC model=* |

- To list the VAAI plug-in claim rules, run the esxcli --server=server_name storage core claimrule list --claimrule-class=VAAI command.

In this example, the VAAI claim rules specify devices that should be claimed by a particular VAAI plug-in.

# esxcli --server=server_name storage core claimrule list --claimrule-class=VAAI

| Rule Class | Rule | Class | Type | Plugin | Matches |
|---|---|---|---|---|---|
| VAAI | 65430 | runtime | vendor | VMW_VAAIP_SYMM | vendor=EMC model=SYMMETRIX |
| VAAI | 65430 | file | vendor | VMW_VAAIP_SYMM | vendor=EMC model=SYMMETRIX |
| VAAI | 65431 | runtime | vendor | VMW_VAAIP_CX | vendor=DGC model=* |
| VAAI | 65431 | file | vendor | VMW_VAAIP_CX | vendor=DGC model=* |
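Because each VAAI-managed device needs both a Filter rule and a VAAI rule, you can cross-check the two listings. The Python sketch below is a heuristic under these assumptions. Rules are paired by their Matches string. "runtime" rows are the rules currently loaded, and "file" rows are the rules saved in the configuration. The rows are typed in by hand rather than parsed from esxcli. ClaimRule and unpaired_matches are hypothetical names.

```python
from typing import List, NamedTuple, Set


class ClaimRule(NamedTuple):
    rule_class: str  # "Filter" or "VAAI" (the Rule Class column)
    rule_id: int
    origin: str      # "runtime" or "file" (the second Class column)
    plugin: str
    matches: str


def unpaired_matches(rules: List[ClaimRule]) -> Set[str]:
    """Return match criteria that have a loaded Filter rule or a loaded
    VAAI rule, but not both."""
    loaded = [r for r in rules if r.origin == "runtime"]
    filter_matches = {r.matches for r in loaded if r.rule_class == "Filter"}
    vaai_matches = {r.matches for r in loaded if r.rule_class == "VAAI"}
    return filter_matches ^ vaai_matches  # symmetric difference


rules = [
    ClaimRule("Filter", 65430, "runtime", "VAAI_FILTER", "vendor=EMC model=SYMMETRIX"),
    ClaimRule("Filter", 65431, "runtime", "VAAI_FILTER", "vendor=DGC model=*"),
    ClaimRule("VAAI", 65430, "runtime", "VMW_VAAIP_SYMM", "vendor=EMC model=SYMMETRIX"),
]
print(unpaired_matches(rules))  # {'vendor=DGC model=*'}
```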

Add Hardware Acceleration Claim Rules

To configure hardware acceleration for a new array, you need to add two claim rules, one for the VAAI filter and another for the VAAI plug-in. For the new claim rules to be active, you first define the rules and then load them into your system.

This procedure is for those block storage devices that do not support T10 SCSI commands and instead use the VAAI plug-ins.

In the procedure, --server=server_name specifies the target server. The specified target server prompts you for a user name and password. Other connection options, such as a configuration file or session file, are supported. For a list of connection options, see Getting Started with vSphere Command-Line Interfaces.

Prerequisites

Install vCLI or deploy the vSphere Management Assistant (vMA) virtual machine. See Getting Started with vSphere Command-Line Interfaces. For troubleshooting, run esxcli commands in the ESXi Shell.

Procedure

- Define a new claim rule for the VAAI filter by running the esxcli --server=server_name storage core claimrule add --claimrule-class=Filter --plugin=VAAI_FILTER command.

- Define a new claim rule for the VAAI plug-in by running the esxcli --server=server_name storage core claimrule add --claimrule-class=VAAI command.

- Load both claim rules by running the following commands:

esxcli --server=server_name storage core claimrule load --claimrule-class=Filter

esxcli --server=server_name storage core claimrule load --claimrule-class=VAAI

- Run the VAAI filter claim rule by running the esxcli --server=server_name storage core claimrule run --claimrule-class=Filter command.

NOTE Only the Filter-class rules need to be run. When the VAAI filter claims a device, it automatically finds the proper VAAI plug-in to attach.

Delete Hardware Acceleration Claim Rules

Use the esxcli command to delete existing hardware acceleration claim rules.

In the procedure, --server=server_name specifies the target server. The specified target server prompts you for a user name and password. Other connection options, such as a configuration file or session file, are supported. For a list of connection options, see Getting Started with vSphere Command-Line Interfaces.

Prerequisites

Install vCLI or deploy the vSphere Management Assistant (vMA) virtual machine. See Getting Started with vSphere Command-Line Interfaces. For troubleshooting, run esxcli commands in the ESXi Shell.

Procedure

- Run the following commands:

esxcli --server=server_name storage core claimrule remove -r claimrule_ID --claimrule-class=Filter

esxcli --server=server_name storage core claimrule remove -r claimrule_ID --claimrule-class=VAAI

Hardware Acceleration on NAS Devices

Hardware acceleration allows your host to integrate with NAS devices and use several hardware operations that NAS storage provides.

The following list shows the supported NAS operations:

- File clone. This operation is similar to the VMFS block cloning except that NAS devices clone entire files instead of file segments.

- Reserve space. Enables storage arrays to allocate space for a virtual disk file in thick format. Typically, when you create a virtual disk on an NFS datastore, the NAS server determines the allocation policy. The default allocation policy on most NAS servers is thin and does not guarantee backing storage to the file. However, the reserve space operation can instruct the NAS device to use vendor-specific mechanisms to reserve space for a virtual disk of nonzero logical size.

- Extended file statistics. Enables storage arrays to accurately report space utilization for virtual machines.

With NAS storage devices, the hardware acceleration integration is implemented through vendor-specific NAS plug-ins. These plug-ins are typically created by vendors and are distributed as VIB packages through a web page. No claim rules are required for the NAS plug-ins to function.

There are several tools available for installing and upgrading VIB packages. They include the esxcli commands and vSphere Update Manager. For more information, see the vSphere Upgrade and Installing and Administering VMware vSphere Update Manager documentation.

Install NAS Plug-In

Install vendor-distributed hardware acceleration NAS plug-ins on your host.

This topic provides an example for a VIB package installation using the esxcli command. For more details, see the vSphere Upgrade documentation.

In the procedure, --server=server_name specifies the target server. The specified target server prompts you for a user name and password. Other connection options, such as a configuration file or session file, are supported. For a list of connection options, see Getting Started with vSphere Command-Line Interfaces.

Prerequisites

Install vCLI or deploy the vSphere Management Assistant (vMA) virtual machine. See Getting Started with vSphere Command-Line Interfaces. For troubleshooting, run esxcli commands in the ESXi Shell.

Procedure

- Place your host in maintenance mode.

- Set the host acceptance level:

esxcli --server=server_name software acceptance set --level=value

The command controls which VIB packages are allowed on the host. The value can be one of the following:

- VMwareCertified

- VMwareAccepted

- PartnerSupported

- CommunitySupported

- Install the VIB package:

esxcli --server=server_name software vib install -v|--viburl=URL

The URL specifies the location of the VIB package to install. http:, https:, ftp:, and file: are supported.

- Verify that the plug-in is installed:

esxcli --server=server_name software vib list

- Reboot your host for the installation to take effect.
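The acceptance level works as a minimum trust level. A host accepts VIBs at its configured level or at any more trusted level. The Python sketch below encodes that comparison. It assumes the four values are ordered from most trusted (VMwareCertified) to least trusted (CommunitySupported), and it does not query a host. vib_allowed is a hypothetical helper.

```python
# Acceptance levels ordered from most trusted to least trusted.
ACCEPTANCE_LEVELS = [
    "VMwareCertified",
    "VMwareAccepted",
    "PartnerSupported",
    "CommunitySupported",
]


def vib_allowed(host_level: str, vib_level: str) -> bool:
    """True if a VIB signed at vib_level may be installed on a host set to host_level.

    Raises ValueError for a name that is not one of the four levels.
    """
    return ACCEPTANCE_LEVELS.index(vib_level) <= ACCEPTANCE_LEVELS.index(host_level)


# A partner-supported NAS plug-in:
print(vib_allowed("PartnerSupported", "PartnerSupported"))  # True
print(vib_allowed("VMwareAccepted", "PartnerSupported"))    # False
```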

Uninstall NAS Plug-Ins

To uninstall a NAS plug-in, remove the VIB package from your host.

This topic discusses how to uninstall a VIB package using the esxcli command. For more details, see the vSphere Upgrade documentation.

In the procedure, --server=server_name specifies the target server. The specified target server prompts you for a user name and password. Other connection options, such as a configuration file or session file, are supported. For a list of connection options, see Getting Started with vSphere Command-Line Interfaces.

Prerequisites

Install vCLI or deploy the vSphere Management Assistant (vMA) virtual machine. See Getting Started with vSphere Command-Line Interfaces. For troubleshooting, run esxcli commands in the ESXi Shell.

Procedure

- Uninstall the plug-in:

esxcli --server=server_name software vib remove -n|--vibname=name

The name is the name of the VIB package to remove.

- Verify that the plug-in is removed:

esxcli --server=server_name software vib list

- Reboot your host for the change to take effect.

Update NAS Plug-Ins

Upgrade hardware acceleration NAS plug-ins on your host when a storage vendor releases a new plug-in version.

This topic discusses how to update a VIB package using the esxcli command. For more details, see the vSphere Upgrade documentation.

In the procedure, --server=server_name specifies the target server. The specified target server prompts you for a user name and password. Other connection options, such as a configuration file or session file, are supported. For a list of connection options, see Getting Started with vSphere Command-Line Interfaces.

Prerequisites

Install vCLI or deploy the vSphere Management Assistant (vMA) virtual machine. See Getting Started with vSphere Command-Line Interfaces. For troubleshooting, run esxcli commands in the ESXi Shell.

Procedure

- Upgrade to a new plug-in version:

esxcli --server=server_name software vib update -v|--viburl=URL

The URL specifies the location of the VIB package to update. http:, https:, ftp:, and file: are supported.

- Verify that the correct version is installed:

esxcli --server=server_name software vib list

- Reboot the host.

Verify Hardware Acceleration Status for NAS

In addition to the vSphere Client, you can use the esxcli command to verify the hardware acceleration status of the NAS device.

In the procedure, --server=server_name specifies the target server. The specified target server prompts you for a user name and password. Other connection options, such as a configuration file or session file, are supported. For a list of connection options, see Getting Started with vSphere Command-Line Interfaces.

Prerequisites

Install vCLI or deploy the vSphere Management Assistant (vMA) virtual machine. See Getting Started with vSphere Command-Line Interfaces. For troubleshooting, run esxcli commands in the ESXi Shell.

Procedure

- Run the esxcli --server=server_name storage nfs list command.

The Hardware Acceleration column in the output shows the status.

Hardware Acceleration Considerations

When you use the hardware acceleration functionality, certain considerations apply.

Several reasons might cause a hardware-accelerated operation to fail.

For any primitive that the array does not implement, the array returns an error. The error triggers the ESXi host to attempt the operation using its native methods.

The VMFS data mover does not leverage hardware offloads and instead uses software data movement when one of the following occurs:

- The source and destination VMFS datastores have different block sizes.

- The source file type is RDM and the destination file type is non-RDM (regular file).

- The source VMDK type is eagerzeroedthick and the destination VMDK type is thin.

- The source or destination VMDK is in sparse or hosted format.

- The source virtual machine has a snapshot.

- The logical address and transfer length in the requested operation are not aligned to the minimum alignment required by the storage device. All datastores created with the vSphere Client are aligned automatically.

- The VMFS has multiple LUNs or extents, and they are on different arrays.

Hardware cloning between arrays, even within the same VMFS datastore, does not work.
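The conditions above can be read as a checklist that decides whether a copy is offloaded or falls back to software data movement. The Python sketch below collects the reasons that apply to a request. CopyRequest and software_fallback_reasons are hypothetical names, and the fields are a simplified description you would fill in yourself. The sketch does not inspect real datastores or call any VMware API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CopyRequest:
    """Hypothetical summary of a copy or clone request (not a vSphere API type)."""
    src_block_size_mb: int = 1
    dst_block_size_mb: int = 1
    src_is_rdm: bool = False
    dst_is_rdm: bool = False
    src_disk_type: str = "thin"       # e.g. "thin", "eagerzeroedthick"
    dst_disk_type: str = "thin"
    sparse_or_hosted: bool = False    # source or destination VMDK format
    src_vm_has_snapshot: bool = False
    aligned: bool = True              # address and length meet device alignment
    arrays: List[str] = field(default_factory=lambda: ["array-1"])


def software_fallback_reasons(req: CopyRequest) -> List[str]:
    """List the documented conditions that force software data movement."""
    reasons = []
    if req.src_block_size_mb != req.dst_block_size_mb:
        reasons.append("different VMFS block sizes")
    if req.src_is_rdm and not req.dst_is_rdm:
        reasons.append("RDM source with non-RDM destination")
    if req.src_disk_type == "eagerzeroedthick" and req.dst_disk_type == "thin":
        reasons.append("eagerzeroedthick source with thin destination")
    if req.sparse_or_hosted:
        reasons.append("sparse or hosted VMDK format")
    if req.src_vm_has_snapshot:
        reasons.append("source virtual machine has a snapshot")
    if not req.aligned:
        reasons.append("misaligned logical address or transfer length")
    if len(set(req.arrays)) > 1:
        reasons.append("LUNs or extents on different arrays")
    return reasons


print(software_fallback_reasons(CopyRequest()))  # []
print(software_fallback_reasons(CopyRequest(dst_block_size_mb=8, src_vm_has_snapshot=True)))
```

An empty list only means none of the documented fallback conditions apply. The array must still implement the primitive, or the host reverts to its native methods anyway.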

Configure and administer profile-based storage

Official Documentation: vSphere Storage Guide, Chapter 21 “Virtual Machine Storage profiles”, page 195. vSphere Storage Guide, Chapter 20 “Using Storage Vendor providers”, page 191.

When using vendor provider components, the vCenter Server can integrate with external storage, both block storage and NFS, so that you can gain a better insight into resources and obtain comprehensive and meaningful storage data.

The vendor provider is a software plug-in developed by a third party through the Storage APIs – Storage Awareness. The vendor provider component is typically installed on the storage array side and acts as a server in the vSphere environment. The vCenter Server uses vendor providers to retrieve information about storage topology, capabilities, and status.

Vendor Provider Requirements and Considerations

When you use the vendor provider functionality, certain requirements and considerations apply.

The vendor provider functionality is implemented as an extension to the VMware vCenter Storage Monitoring Service (SMS). Because the SMS is a part of the vCenter Server, the vendor provider functionality does not need special installation or enablement on the vCenter Server side.

To use vendor providers, follow these requirements:

- vCenter Server version 5.0 or later.

- ESX/ESXi hosts version 4.0 or later.

- Storage arrays that support Storage APIs – Storage Awareness plug-ins. The vendor provider component must be installed on the storage side. See the vSphere Compatibility Guide or check with your storage vendor.

NOTE Fibre Channel over Ethernet (FCoE) does not support vendor providers.
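The requirements above amount to a few version and capability checks. The following Python sketch expresses them as a pre-flight check. The function names, their parameters and the way versions are passed in are assumptions for illustration; the sketch does not query vCenter Server, the hosts or the array.

```python
def parse_version(text: str) -> tuple[int, int]:
    """Turn '5.0' or '4.1.2' into (major, minor); a missing minor part counts as 0."""
    parts = [int(p) for p in text.split(".")] + [0]
    return parts[0], parts[1]


def vendor_provider_problems(vcenter_version: str,
                             host_versions: dict[str, str],
                             array_supports_vasa: bool,
                             uses_fcoe: bool) -> list[str]:
    """List unmet vendor provider requirements (hypothetical helper)."""
    problems = []
    # vCenter Server 5.0 or later is required.
    if parse_version(vcenter_version) < (5, 0):
        problems.append(f"vCenter Server {vcenter_version} is older than 5.0")
    # Every ESX/ESXi host must be version 4.0 or later.
    for host, version in sorted(host_versions.items()):
        if parse_version(version) < (4, 0):
            problems.append(f"host {host} runs {version}, older than 4.0")
    # The array needs a Storage APIs - Storage Awareness plug-in on the storage side.
    if not array_supports_vasa:
        problems.append("array has no Storage APIs - Storage Awareness plug-in")
    # FCoE does not support vendor providers.
    if uses_fcoe:
        problems.append("FCoE storage does not support vendor providers")
    return problems
```

An empty list means every modelled requirement is met; the vSphere Compatibility Guide remains the authority on whether a given array qualifies.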

The following considerations apply when you use vendor providers:

- Both block storage and file system storage devices can use vendor providers.

- Vendor providers can run anywhere except on the vCenter Server.

- Multiple vCenter Servers can simultaneously connect to a single instance of a vendor provider.

- A single vCenter Server can simultaneously connect to multiple different vendor providers. It is possible to have a different vendor provider for each type of physical storage device available to your host.
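The considerations above describe a many-to-many relationship between vCenter Servers and vendor providers. As a hypothetical bookkeeping sketch (the `ProviderRegistry` class and its `register` method are illustrative, not a VMware API), registrations can be tracked in both directions while enforcing the one placement rule from the list. The sketch assumes the vCenter Server's name also identifies the machine it runs on.

```python
from collections import defaultdict


class ProviderRegistry:
    """Hypothetical model of vCenter Server <-> vendor provider registrations."""

    def __init__(self) -> None:
        self.providers_of = defaultdict(set)  # vCenter Server -> vendor providers
        self.vcenters_of = defaultdict(set)   # vendor provider -> vCenter Servers

    def register(self, vcenter: str, provider: str, runs_on: str) -> None:
        """Record that `vcenter` uses `provider`, which runs on machine `runs_on`."""
        # Vendor providers can run anywhere except on the vCenter Server itself.
        if runs_on == vcenter:
            raise ValueError(f"{provider} must not run on vCenter Server {vcenter}")
        # Many-to-many: one provider may serve several vCenter Servers and
        # one vCenter Server may use several providers.
        self.providers_of[vcenter].add(provider)
        self.vcenters_of[provider].add(vcenter)
```

Keeping both mappings makes either question ("which providers does this vCenter Server use?" and "which vCenter Servers share this provider?") a single lookup.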

Prepare storage for maintenance

Official Documentation: vSphere Storage Guide, Chapter 13 “Working with Datastores”, page 128, describes how to unmount a VMFS or NFS datastore.

Unmount VMFS or NFS Datastores

When you unmount a datastore, it remains intact, but can no longer be seen from the hosts that you specify.

The datastore continues to appear on other hosts, where it remains mounted.

Do not perform any configuration operations that might result in I/O to the datastore while the unmount is in progress.

NOTE vSphere HA heartbeating does not prevent you from unmounting the datastore. If a datastore is used for heartbeating, unmounting it might cause the host to fail and restart any active virtual machine. If the heartbeating check fails, the vSphere Client displays a warning.

Prerequisites

Before unmounting VMFS datastores, make sure that the following prerequisites are met:

- No virtual machines reside on the datastore.

- The datastore is not part of a datastore cluster.

- The datastore is not managed by Storage DRS.

- Storage I/O Control is disabled for this datastore.

- The datastore is not used for vSphere HA heartbeating.
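The prerequisites above work as a checklist that must come back empty before you start the unmount. A minimal Python sketch, assuming you have already gathered the datastore's state; `DatastoreState` and `unmount_blockers` are hypothetical names, not part of any vSphere API.

```python
from dataclasses import dataclass


@dataclass
class DatastoreState:
    """Hypothetical snapshot of a VMFS datastore's state (illustrative fields)."""
    name: str
    vm_count: int
    in_datastore_cluster: bool
    storage_drs_managed: bool
    sioc_enabled: bool
    ha_heartbeat: bool


def unmount_blockers(ds: DatastoreState) -> list[str]:
    """Return every unmet prerequisite; an empty list means the unmount may proceed."""
    checks = [
        (ds.vm_count > 0, "virtual machines still reside on the datastore"),
        (ds.in_datastore_cluster, "datastore is part of a datastore cluster"),
        (ds.storage_drs_managed, "datastore is managed by Storage DRS"),
        (ds.sioc_enabled, "Storage I/O Control is enabled"),
        (ds.ha_heartbeat, "datastore is used for vSphere HA heartbeating"),
    ]
    return [message for failed, message in checks if failed]
```

Listing all blockers at once, rather than stopping at the first, lets you clear them in a single maintenance pass.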

Procedure

- Display the datastores.

- Right-click the datastore to unmount and select Unmount.

- If the datastore is shared, specify which hosts should no longer access the datastore.

  - Deselect the hosts on which you want to keep the datastore mounted. By default, all hosts are selected.

  - Click Next.

  - Review the list of hosts from which to unmount the datastore, and click Finish.

- Confirm that you want to unmount the datastore.

After you unmount a VMFS datastore, the datastore becomes inactive and is dimmed in the host’s datastore list. An unmounted NFS datastore no longer appears on the list.

NOTE A datastore that is unmounted from some hosts but still mounted on others is shown as active in the Datastores and Datastore Clusters view.
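The host selection in the wizard and the resulting views reduce to simple set logic. The Python sketch below illustrates that reading of the procedure; the function names are hypothetical and nothing here calls vSphere.

```python
def hosts_to_unmount(mounted_on: set[str], keep_mounted: set[str]) -> set[str]:
    """All hosts start selected; deselecting `keep_mounted` leaves the hosts to unmount from."""
    return mounted_on - keep_mounted


def host_list_appearance(fs_type: str, unmounted_here: bool) -> str:
    """How the datastore appears in one host's datastore list after the wizard finishes."""
    if not unmounted_here:
        return "mounted"
    # An unmounted VMFS datastore stays listed but inactive (dimmed);
    # an unmounted NFS datastore no longer appears on the list.
    return "inactive (dimmed)" if fs_type == "VMFS" else "not listed"


def still_mounted_somewhere(mounted_on: set[str], unmounted_from: set[str]) -> bool:
    """True when at least one host keeps the datastore mounted.

    Per the note above, such a datastore is shown as active in the
    Datastores and Datastore Clusters view.
    """
    return bool(mounted_on - unmounted_from)
```

For a datastore mounted on three hosts where only `esx03` keeps it, `hosts_to_unmount` yields the other two hosts, and the datastore still counts as mounted somewhere.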

Upgrade VMware storage infrastructure

Official Documentation: vSphere Storage Guide, Chapter 13 “Working with Datastores”, page 120 has a section on Upgrading VMFS Datastores.

If your datastores were formatted with VMFS2 or VMFS3, you can upgrade the datastores to VMFS5.

When you perform datastore upgrades, consider the following items:

- To upgrade a VMFS2 datastore, you use a two-step process that involves upgrading VMFS2 to VMFS3 first. Because ESXi 5.0 hosts cannot access VMFS2 datastores, use a legacy host, ESX/ESXi 4.x or earlier, to access the VMFS2 datastore and perform the VMFS2 to VMFS3 upgrade. After you upgrade your VMFS2 datastore to VMFS3, the datastore becomes available on the ESXi 5.0 host, where you complete the process of upgrading to VMFS5.

- When you upgrade your datastore, the ESXi file-locking mechanism ensures that no remote host or local process is accessing the VMFS datastore being upgraded. Your host preserves all files on the datastore.

- The datastore upgrade is a one-way process. After upgrading your datastore, you cannot revert it back to its previous VMFS format.
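The upgrade path above depends only on the starting format. A small Python sketch of that decision; `upgrade_plan` is a hypothetical helper, and the host descriptions come from the text above.

```python
def upgrade_plan(current_format: str) -> list[tuple[str, str]]:
    """Return (upgrade step, host to run it on) pairs needed to reach VMFS5."""
    if current_format == "VMFS2":
        # ESXi 5.0 cannot access VMFS2, so the first hop needs a legacy host.
        return [("VMFS2 -> VMFS3", "legacy ESX/ESXi 4.x or earlier host"),
                ("VMFS3 -> VMFS5", "ESXi 5.0 host")]
    if current_format == "VMFS3":
        return [("VMFS3 -> VMFS5", "ESXi 5.0 host")]
    if current_format == "VMFS5":
        # Nothing to do; upgrades are one-way, so there is no path back either.
        return []
    raise ValueError(f"unknown VMFS format: {current_format}")
```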

An upgraded VMFS5 datastore differs from a newly formatted VMFS5 datastore in the following ways.

| Characteristics | Upgraded VMFS5 | Formatted VMFS5 |

| File block size | 1, 2, 4, and 8MB | 1MB |

| Subblock size | 64KB | 8KB |

| Partition format | MBR. Conversion to GPT happens only after you expand the datastore. | GPT |

| Datastore limits | Retains limits of VMFS3 datastore. | |
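Going by the table above, the subblock size is what separates an upgraded VMFS5 datastore from a newly formatted one, because a 1MB file block size can occur in both. The following Python sketch encodes that reading. `vmfs5_origin` is hypothetical and assumes you already know both sizes in bytes; how you obtain them is outside the scope of this sketch.

```python
KB = 1024
MB = 1024 * KB


def vmfs5_origin(file_block_size: int, subblock_size: int) -> str:
    """Guess whether a VMFS5 datastore was upgraded or newly formatted."""
    if subblock_size == 64 * KB:
        # Only upgraded VMFS5 datastores keep the 64KB subblock.
        return "upgraded"
    if subblock_size == 8 * KB and file_block_size == 1 * MB:
        # Newly formatted VMFS5 uses 8KB subblocks and a 1MB file block.
        return "formatted"
    # Any other combination does not match the table.
    return "unknown"
```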

Upgrade VMFS2 Datastores to VMFS3

If your datastore was formatted with VMFS2, you must first upgrade it to VMFS3. Because ESXi 5.0 hosts cannot access VMFS2 datastores, use a legacy host, ESX/ESXi 4.x or earlier, to access the VMFS2 datastore and perform the VMFS2 to VMFS3 upgrade.

Prerequisites

- Commit or discard any changes to virtual disks in the VMFS2 datastore that you plan to upgrade.

- Back up the VMFS2 datastore.

- Be sure that no powered-on virtual machines are using the VMFS2 datastore.

- Be sure that no other ESXi host is accessing the VMFS2 datastore.

- To upgrade the VMFS2 file system, its file block size must not exceed 8MB.
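The VMFS2 prerequisites above can be expressed as a Python checklist sketch. `Vmfs2Datastore` and `vmfs2_upgrade_blockers` are hypothetical names; the 8MB block-size limit comes from the list above.

```python
from dataclasses import dataclass

MB = 1024 * 1024


@dataclass
class Vmfs2Datastore:
    """Hypothetical description of a VMFS2 datastore (fields mirror the prerequisites)."""
    uncommitted_disk_changes: bool
    backed_up: bool
    powered_on_vms: int
    other_hosts_accessing: int
    file_block_size: int  # in bytes


def vmfs2_upgrade_blockers(ds: Vmfs2Datastore) -> list[str]:
    """Return every unmet prerequisite for the VMFS2 to VMFS3 upgrade."""
    checks = [
        (ds.uncommitted_disk_changes, "commit or discard changes to virtual disks"),
        (not ds.backed_up, "back up the datastore"),
        (ds.powered_on_vms > 0, "power off virtual machines using the datastore"),
        (ds.other_hosts_accessing > 0, "stop access from other hosts"),
        (ds.file_block_size > 8 * MB, "file block size exceeds 8MB"),
    ]
    return [message for failed, message in checks if failed]
```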

Procedure

- Log in to the vSphere Client and select a host from the Inventory panel.

- Click the Configuration tab and click Storage.

- Select the datastore that uses the VMFS2 format.

- Click Upgrade to VMFS3.

- Perform a rescan on all hosts that see the datastore.

What to do next

After you upgrade your VMFS2 datastore to VMFS3, the datastore becomes available on the ESXi 5.0 host. You can now use the ESXi 5.0 host to complete the process of upgrading to VMFS5.

Upgrade VMFS3 Datastores to VMFS5

VMFS5 is a new version of the VMware cluster file system that provides performance and scalability improvements.

Prerequisites

- If you use a VMFS2 datastore, you must first upgrade it to VMFS3. Follow the instructions in “Upgrade VMFS2 Datastores to VMFS3,” on page 121.

- All hosts accessing the datastore must support VMFS5.

- Verify that the volume to be upgraded has at least 2MB of free blocks available and 1 free file descriptor.
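The VMFS5 upgrade prerequisites can likewise be sketched as a pre-flight check in Python. The function name and its parameters are assumptions for illustration; the 2MB and one-file-descriptor thresholds come from the list above.

```python
MB = 1024 * 1024


def vmfs5_upgrade_blockers(current_format: str,
                           hosts_support_vmfs5: dict[str, bool],
                           free_bytes: int,
                           free_file_descriptors: int) -> list[str]:
    """Return every unmet prerequisite for the VMFS3 to VMFS5 upgrade."""
    blockers = []
    if current_format == "VMFS2":
        blockers.append("upgrade VMFS2 to VMFS3 first")
    # All hosts accessing the datastore must support VMFS5.
    unsupported = sorted(host for host, ok in hosts_support_vmfs5.items() if not ok)
    if unsupported:
        blockers.append("hosts without VMFS5 support: " + ", ".join(unsupported))
    # At least 2MB of free blocks and one free file descriptor are required.
    if free_bytes < 2 * MB:
        blockers.append("less than 2MB of free blocks")
    if free_file_descriptors < 1:
        blockers.append("no free file descriptor")
    return blockers
```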

Procedure

- Log in to the vSphere Client and select a host from the Inventory panel.

- Click the Configuration tab and click Storage.

- Select the VMFS3 datastore.

- Click Upgrade to VMFS5. A warning message about host version support appears.

- Click OK to start the upgrade. The task Upgrade VMFS appears in the Recent Tasks list.

- Perform a rescan on all hosts that are associated with the datastore.

Other exam notes

- The Saffageek VCAP5-DCA Objectives http://thesaffageek.co.uk/vcap5-dca-objectives/

- Paul Grevink The VCAP5-DCA diaries http://paulgrevink.wordpress.com/the-vcap5-dca-diaries/

- Edward Grigson VCAP5-DCA notes http://www.vexperienced.co.uk/vcap5-dca/

- Jason Langer VCAP5-DCA notes http://www.virtuallanger.com/vcap-dca-5/

- The Foglite VCAP5-DCA notes http://thefoglite.com/vcap-dca5-objective/

VMware vSphere official documentation

| VMware vSphere Basics Guide | html | epub | mobi |

| vSphere Installation and Setup Guide | html | epub | mobi |

| vSphere Upgrade Guide | html | epub | mobi |

| vCenter Server and Host Management Guide | html | epub | mobi |

| vSphere Virtual Machine Administration Guide | html | epub | mobi |

| vSphere Host Profiles Guide | html | epub | mobi |

| vSphere Networking Guide | html | epub | mobi |

| vSphere Storage Guide | html | epub | mobi |

| vSphere Security Guide | html | epub | mobi |

| vSphere Resource Management Guide | html | epub | mobi |

| vSphere Availability Guide | html | epub | mobi |

| vSphere Monitoring and Performance Guide | html | epub | mobi |

| vSphere Troubleshooting | html | epub | mobi |

| VMware vSphere Examples and Scenarios Guide | html | epub | mobi |

Disclaimer.

The information in this article is provided “AS IS” with no warranties, and confers no rights. This article does not represent the thoughts, intentions, plans or strategies of my employer. It is solely my opinion.